Build an AI Assurance Plan That Delivers Measurable Impact

Kovrr’s AI Assurance Plan helps organizations strengthen oversight of both traditional and GenAI systems. It uses framework-based scoring to identify which governance actions deliver the greatest improvement in control maturity and modeled exposure reduction. By linking safeguard gaps to financially quantified AI risk outcomes, it helps leaders allocate resources effectively and sustain long-term resilience.

The Gap Between AI Risk Insight and Action

AI governance teams have made progress assessing safeguards, yet many still struggle to translate those findings into measurable advancement. Without a structured way to prioritize improvements through AI Risk Quantification (AIRQ), organizations stay aware of their exposure but lack a practical path forward. AIRQ gives leaders a defensible basis for determining which improvements will reduce risk most effectively.

From AI Compliance Readiness to Action

Kovrr’s AI Assurance Plan builds directly on the AI Compliance Readiness module, using its safeguard maturity results to identify where improvements will deliver the highest return. This connection ensures that every gap revealed during readiness assessments becomes a clear, measurable step toward stronger GenAI and AI governance.

Define Where to Act First

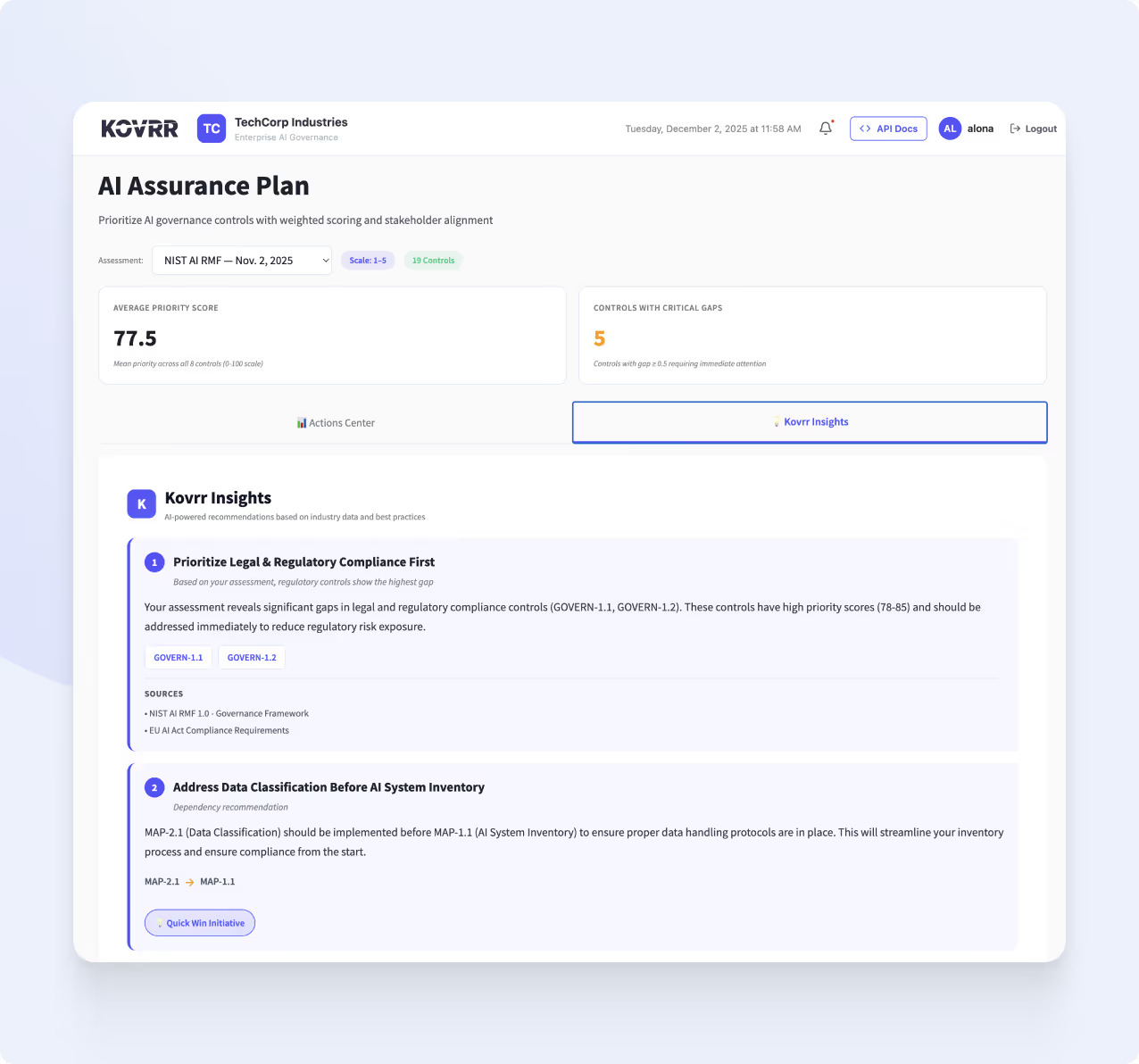

Kovrr’s AI Assurance Plan empowers teams to focus on the initiatives that drive the greatest measurable improvement. Rather than distributing resources evenly across all gaps, leaders can make targeted, evidence-based decisions guided by modeled outcomes.

Rank improvements by AI Risk Quantification (AIRQ) insights: Identify which actions yield the greatest reduction in financial exposure.

Link outcomes to ROI: Connect each improvement to its projected financial and operational benefits.

Eliminate guesswork: Replace subjective prioritization with transparent, monetary, and evidence-backed reasoning.

Guide long-term strategy: Build a roadmap that evolves with maturity progress, dependency sequencing, and shifting GenAI risk conditions.

Every improvement becomes traceable and aligned with leadership objectives, turning assurance planning into a financially grounded, verifiable process.
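As a rough illustration of ranking by exposure reduction and linking each action to ROI, the sketch below sorts a few candidate improvements. It is not Kovrr’s implementation: the `Improvement` class, its field names, the ROI formula (net benefit per dollar spent), and all dollar figures are assumptions invented for the example.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Improvement:
    """A candidate governance action. All fields are hypothetical inputs."""
    name: str
    exposure_before: float  # modeled annual exposure before the action, USD
    exposure_after: float   # modeled annual exposure after the action, USD
    cost: float             # estimated implementation cost, USD

    @property
    def exposure_reduction(self) -> float:
        return self.exposure_before - self.exposure_after

    @property
    def roi(self) -> float:
        # Assumed definition: net benefit per dollar spent.
        return (self.exposure_reduction - self.cost) / self.cost


def rank_by_exposure_reduction(items):
    """Return the items sorted from largest to smallest exposure reduction."""
    return sorted(items, key=lambda i: i.exposure_reduction, reverse=True)


# Illustrative numbers only; they do not come from any real assessment.
candidates = [
    Improvement("Model inventory", 2_000_000, 1_700_000, 50_000),
    Improvement("Prompt-injection testing", 2_000_000, 1_400_000, 200_000),
    Improvement("Vendor review policy", 2_000_000, 1_900_000, 20_000),
]

for item in rank_by_exposure_reduction(candidates):
    print(f"{item.name}: reduction=${item.exposure_reduction:,.0f}, ROI={item.roi:.1f}x")
```

In this toy data the action with the largest exposure reduction is not the one with the highest ROI, which is why the two views are worth reporting side by side.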

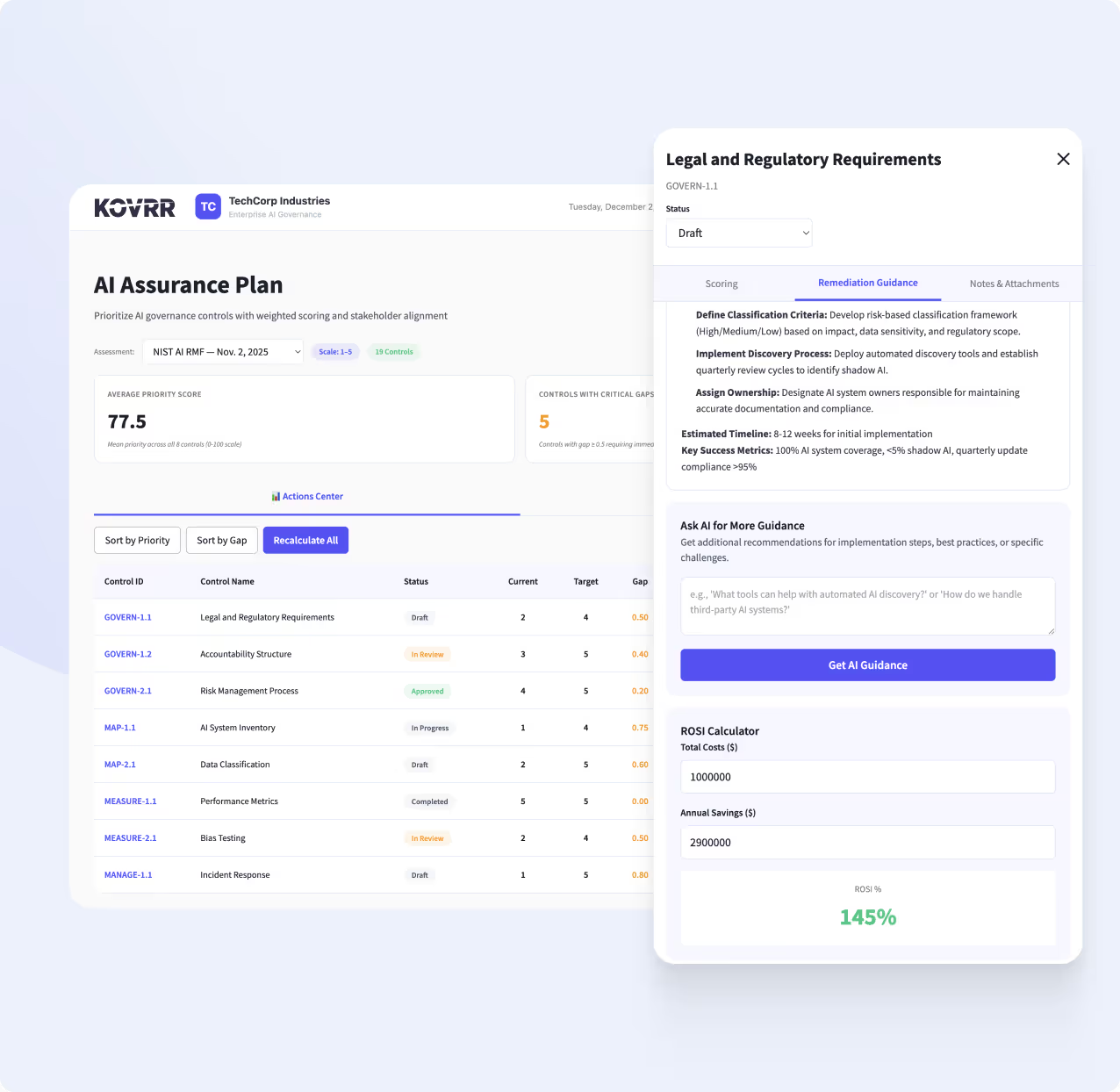

Quantify the Value of AI Governance Initiatives

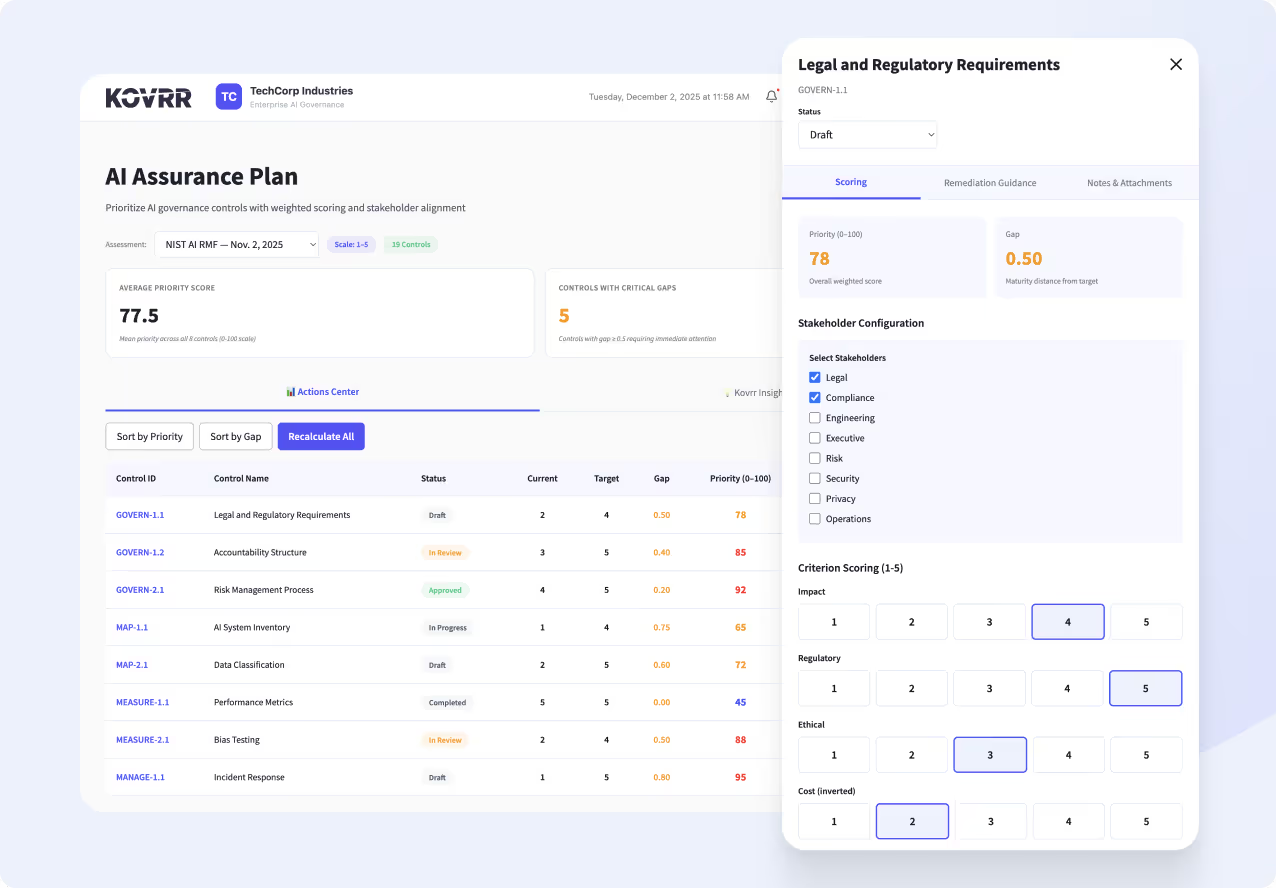

Kovrr’s AI Assurance Plan applies weighted prioritization to highlight which governance improvements create the most measurable progress. It shows how strengthening safeguards influences modeled exposure reduction and financial impact.

Attribute weighted impact scores to each AI framework control to evaluate its importance.

Compare how improvements shift assurance maturity and modeled financial exposure.

Leverage control prioritization results to plan and justify high-impact initiatives.

Support defensible investment decisions with structured, evidence-based insight.

This approach replaces subjective decision-making with data-driven prioritization, helping leaders focus on the safeguards that deliver the greatest financial value.
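One way to picture weighted prioritization is a weighted average of control maturity, where raising a heavily weighted control moves the overall score more than raising a lightly weighted one. The sketch below is a simplified assumption, not the platform’s scoring model: the control names, weights, the 0 to 5 maturity scale, and the helper functions are all hypothetical.

```python
def weighted_maturity(controls):
    """Weighted average maturity. `controls` maps name -> (weight, maturity)."""
    total_weight = sum(weight for weight, _ in controls.values())
    return sum(weight * maturity for weight, maturity in controls.values()) / total_weight


def score_gain(controls, name, target_maturity):
    """Change in the overall score if one control is raised to target_maturity."""
    weight, _ = controls[name]
    improved = {**controls, name: (weight, target_maturity)}
    return weighted_maturity(improved) - weighted_maturity(controls)


# Hypothetical controls: (impact weight, current maturity on an assumed 0-5 scale).
controls = {
    "Governance policy": (3.0, 2),
    "Model monitoring": (5.0, 1),
    "Data provenance": (2.0, 3),
}

baseline = weighted_maturity(controls)
gains = {name: score_gain(controls, name, 4) for name in controls}

print(f"Baseline weighted maturity: {baseline:.2f}")
for name, gain in sorted(gains.items(), key=lambda kv: kv[1], reverse=True):
    print(f"Raise '{name}' to level 4: +{gain:.2f}")
```

A fuller model would also map each maturity change to a modeled financial exposure figure; this sketch covers only the maturity side.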

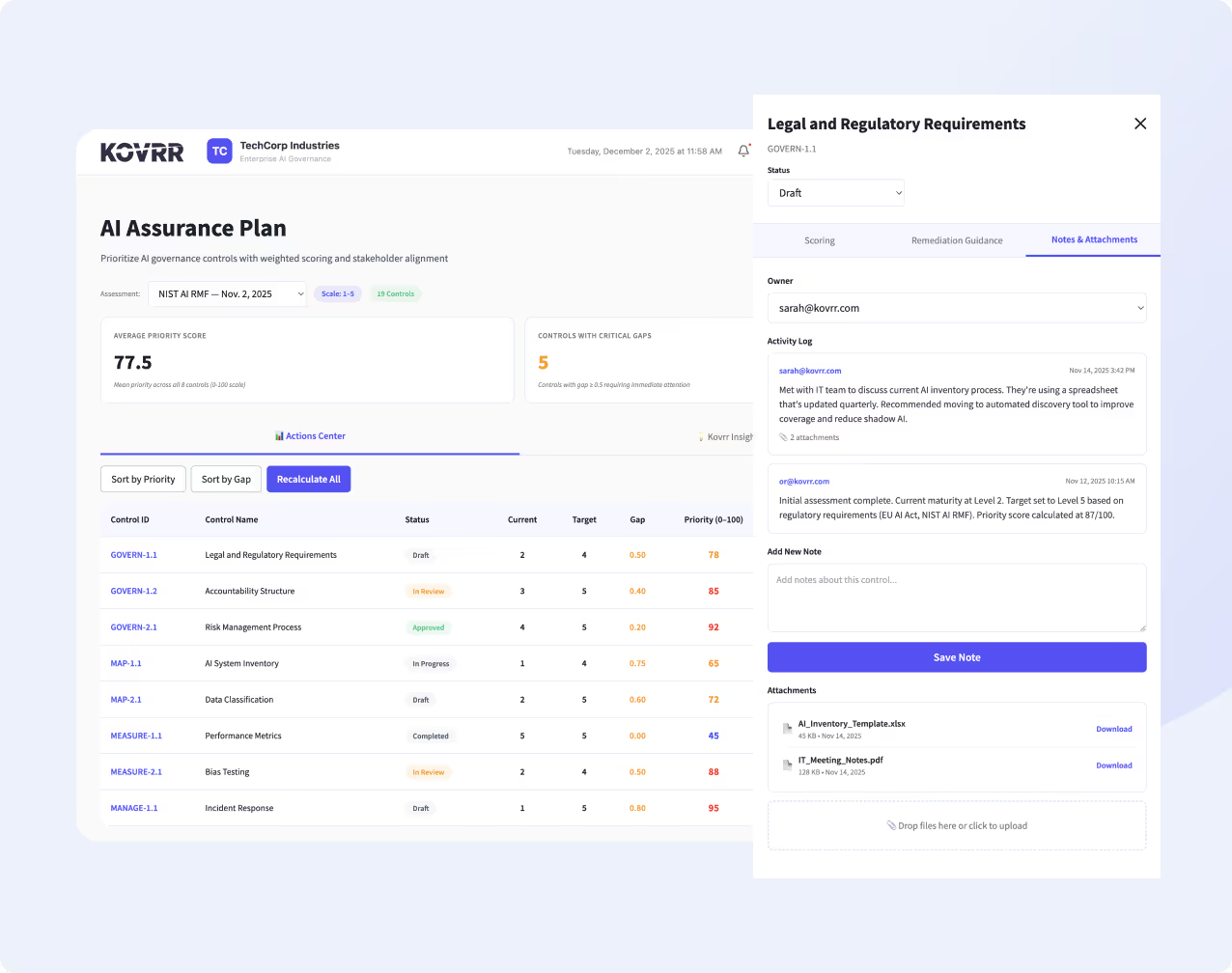

Centralize Governance Evidence With Notes and Attachments

The AI Assurance Plan includes a dedicated Notes and Attachments tab for uploading supporting documents, audit records, and policy references directly within the platform. This feature streamlines documentation, consolidates evidence in one place, and helps teams maintain transparency, simplify reviews, and demonstrate accountability as governance decisions evolve.

Plan for Maximum Financial Impact

Once improvement areas are defined, Kovrr’s AI Assurance Plan connects modeled financial outcomes to planning decisions, ensuring every initiative delivers tangible value.

Direct resources toward actions that yield the highest return in maturity advancement and risk reduction.

Track improvements through financially quantified metrics and dashboard views that demonstrate progress.

Align teams across risk, security, and compliance around shared, data-driven priorities.

Communicate performance through reports that clearly convey assurance outcomes to executives.

With Kovrr, planning becomes transparent and defensible, giving organizations confidence that every decision strengthens AI governance.

Make AI Assurance a Shared Responsibility

Kovrr’s AI Assurance Plan enables teams to assign stakeholders across functions, including risk, security, compliance, and operations, so that everyone contributes to the assurance process. By grounding improvement initiatives in financially quantified metrics, the platform creates a common language for evaluating impact and prioritizing action across teams. With shared accountability and objective performance indicators, organizations build a coordinated governance framework.

Why Data-Driven Prioritization Matters

AI governance maturity doesn’t advance through intuition. Kovrr’s AI Assurance Plan replaces subjective judgment with quantified, evidence-based prioritization. By linking governance actions to modeled financial outcomes, the module gives leaders the insight to plan investments, demonstrate progress, and justify results with confidence. Every improvement becomes defensible, measurable, and aligned with enterprise objectives for long-term assurance and accountability.

From Insight to Measurable Outcomes

Kovrr’s AI Risk Quantification (AIRQ) module complements Maturity Gap Analysis, modeling how prioritized initiatives translate into measurable financial impact and maintaining a continuous feedback loop between governance strategy and quantified performance.

AI Assurance Plan FAQs

What is the AI Assurance Plan module?

Kovrr’s AI Assurance Plan transforms AI and GenAI assessment results into an actionable roadmap for measurable improvement. By analyzing maturity deltas, control criticality, and contextual risk, the plan identifies which initiatives yield the greatest progress. The outcome is a ranked, data-driven improvement strategy that supports defensible oversight, informed budgeting, and continuous advancement across frameworks and regulations such as NIST AI RMF, ISO 42001, and the EU AI Act.

How does Kovrr determine which governance improvements matter most?

The AI Assurance Plan evaluates each safeguard through quantitative scoring, weighted factors, and dependency analysis. It accounts for implementation effort, regulatory urgency, and risk-reduction potential to produce a transparent, defensible ranking of actions. This structured approach ensures teams focus resources on the initiatives that deliver the highest assurance value and return on effort.
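To make the combination of weighted factors and dependency analysis concrete, the sketch below scores each action from the three factors named above and then orders actions so that none is scheduled before its prerequisites. It is an illustrative assumption only: the factor weights, the 0 to 1 input scale, the action names, and the greedy ordering strategy are hypothetical and are not taken from Kovrr’s methodology. The ordering uses `graphlib.TopologicalSorter` from the Python standard library (Python 3.9+).

```python
from graphlib import TopologicalSorter

# Hypothetical weights for the three factors; inputs are assumed to be on a 0-1 scale.
WEIGHTS = {"risk_reduction": 0.5, "urgency": 0.3, "effort": 0.2}


def priority(action):
    """Higher is better. Effort counts against an action, so it is inverted."""
    return (WEIGHTS["risk_reduction"] * action["risk_reduction"]
            + WEIGHTS["urgency"] * action["urgency"]
            + WEIGHTS["effort"] * (1.0 - action["effort"]))


def plan(actions, depends_on):
    """Greedy ordering: always pick the highest-priority action whose
    prerequisites are already scheduled."""
    sorter = TopologicalSorter({name: depends_on.get(name, ()) for name in actions})
    sorter.prepare()  # raises graphlib.CycleError on circular dependencies
    ready, ordered = [], []
    while sorter.is_active():
        ready.extend(sorter.get_ready())
        ready.sort(key=lambda name: priority(actions[name]))
        best = ready.pop()  # last element has the highest priority
        ordered.append(best)
        sorter.done(best)   # unlocks actions that depended on `best`
    return ordered


# Illustrative inputs only.
actions = {
    "AI inventory": {"risk_reduction": 0.6, "urgency": 0.8, "effort": 0.2},
    "Model monitoring": {"risk_reduction": 0.9, "urgency": 0.7, "effort": 0.6},
    "Incident playbook": {"risk_reduction": 0.5, "urgency": 0.8, "effort": 0.3},
}
depends_on = {"Model monitoring": ["AI inventory"]}

roadmap = plan(actions, depends_on)
print(roadmap)
```

Here "Model monitoring" has the highest score but is scheduled only after "AI inventory", its prerequisite. The greedy choice is a simplification: it does not pull a low-scoring prerequisite forward just because a high-value action depends on it.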

Which AI governance frameworks does the module align with?

Kovrr’s methodology maps directly to leading AI governance and security frameworks, including NIST AI RMF and ISO 42001, and aligns with regulatory standards such as the EU AI Act. This alignment ensures every prioritized action supports both compliance and resilience objectives. The approach adapts to organizational context, allowing teams to demonstrate measurable progress toward internal benchmarks and external expectations.

How does the AI Assurance Plan connect to AI Risk Quantification (AIRQ)?

Kovrr’s AI Assurance Plan builds directly on AIRQ outputs. Governance gaps identified through assessments are evaluated based on their modeled financial impact and exposure reduction potential. This integration ensures that improvement initiatives are prioritized according to quantified risk outcomes, enabling organizations to focus resources on actions that deliver measurable, financially grounded risk reduction.